fusetweet vs Seedance 2.0

Side-by-side comparison to help you choose the right AI tool.

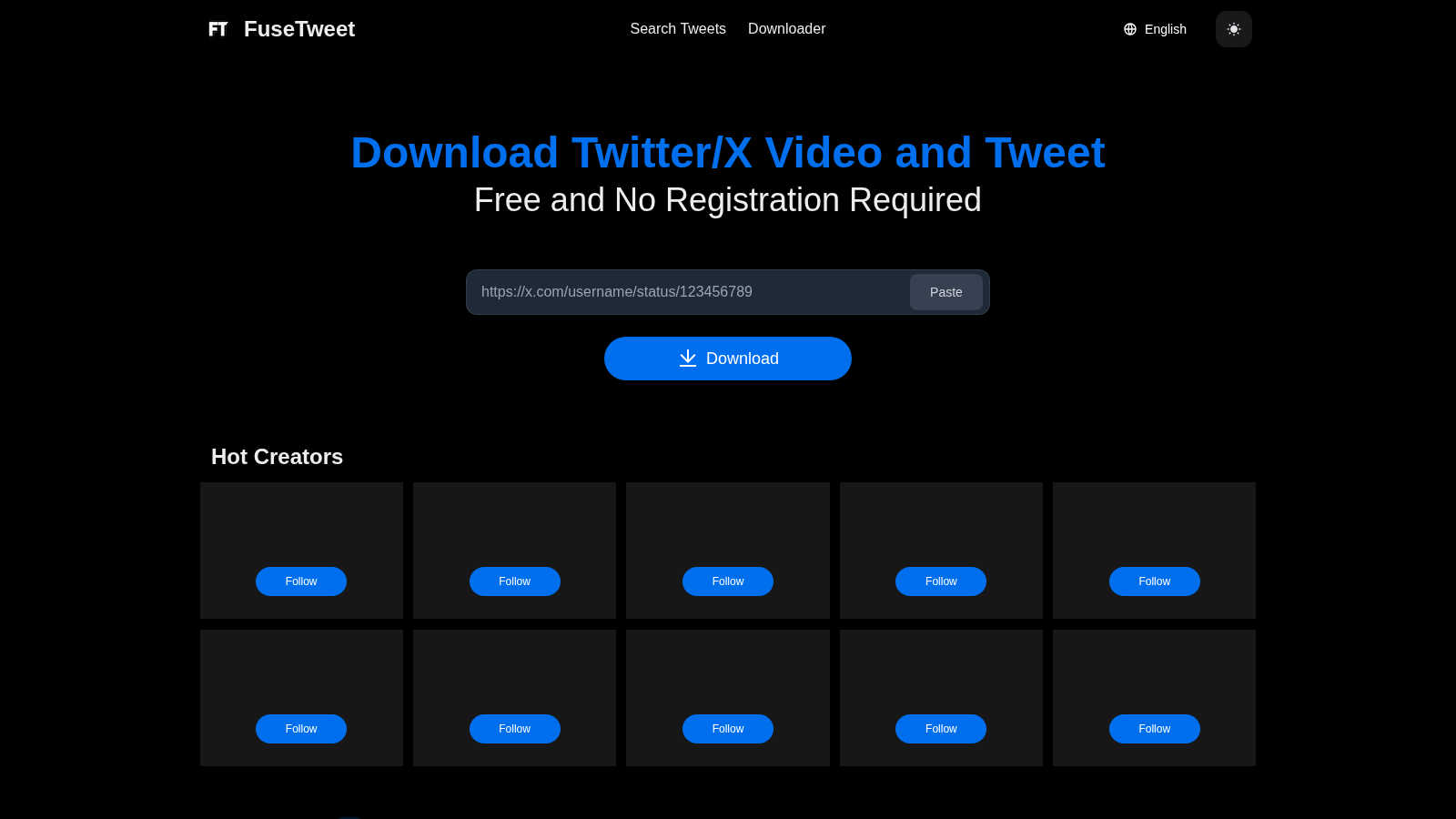

fusetweet

Save, edit, and repost any Twitter video or tweet for free.

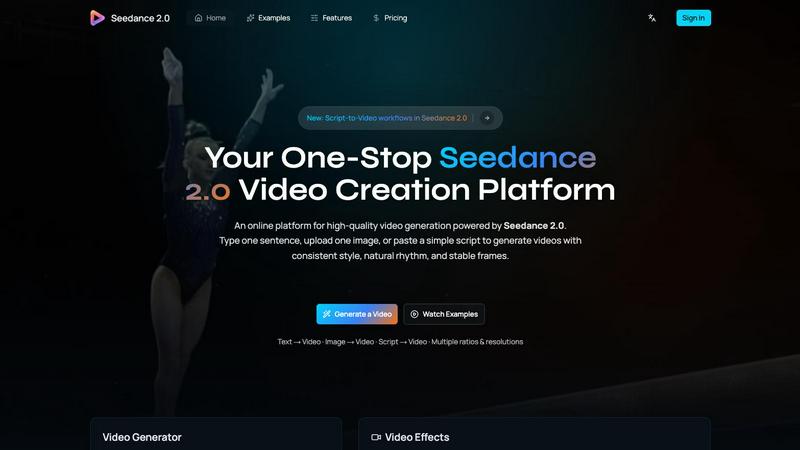

Seedance 2.0

Seedance 2.0 generates hyper-realistic videos from text or images with consistent style and fluid motion.

Last updated: February 28, 2026


Feature Comparison

fusetweet

Local Browser-Side Processing

All core operations, from fetching the original tweet data to processing translations, run directly in your web browser. Tweet content is not sent to a fusetweet server for analysis, and your X API key is never transmitted to fusetweet: requests go straight from your browser to X's API. This design keeps your credentials private, avoids a round trip through an intermediary server, and means an outage on fusetweet's side does not interrupt a workflow already open in your browser.

Integrated Translation Editor

Instead of using a separate translation service, fusetweet builds translation capabilities directly into its editing interface. This allows you to fetch a tweet and instantly see a translated version, which you can then fine-tune and adjust for nuance, tone, and cultural context before posting. This integrated approach saves time and improves the quality of the final output compared to copying and pasting between different apps.

Direct Write-Only API Integration

fusetweet uses an X/Twitter API key with write permissions, which you provide. This avoids interactive OAuth sign-in flows and eliminates the need for a server-side middleman to handle your credentials. You keep direct control over your key, which improves security and transparency, while the tool acts as a client-side interface that publishes the content you've prepared.
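Even without an interactive OAuth flow, every write request to X's API still has to be authenticated. A minimal sketch, assuming user-context OAuth 1.0a credentials (consumer key/secret plus access token/secret), shows how a client can sign a request entirely locally using the standard HMAC-SHA1 procedure from RFC 5849. This is an illustration of the general technique, not fusetweet's code; `oauth1_header` is a hypothetical helper.

```python
import base64
import hashlib
import hmac
import secrets
import time
from typing import Optional
from urllib.parse import quote


def _enc(value: str) -> str:
    # RFC 3986 percent-encoding: only unreserved characters (A-Z a-z 0-9 - . _ ~) stay literal.
    return quote(value, safe="")


def oauth1_header(
    method: str,
    url: str,
    consumer_key: str,
    consumer_secret: str,
    token: str,
    token_secret: str,
    nonce: Optional[str] = None,
    timestamp: Optional[str] = None,
) -> str:
    """Build an OAuth 1.0a Authorization header (HMAC-SHA1) for a JSON-body request.

    `url` must be the base URL without a query string. JSON bodies are not
    signed in OAuth 1.0a, so only the oauth_* parameters enter the signature.
    """
    params = {
        "oauth_consumer_key": consumer_key,
        "oauth_nonce": nonce or secrets.token_hex(16),
        "oauth_signature_method": "HMAC-SHA1",
        "oauth_timestamp": timestamp or str(int(time.time())),
        "oauth_token": token,
        "oauth_version": "1.0",
    }
    # Parameter string: encoded key=value pairs, sorted by key, joined with '&'.
    param_string = "&".join(f"{_enc(k)}={_enc(v)}" for k, v in sorted(params.items()))
    # Signature base string: METHOD & encoded URL & encoded parameter string.
    base_string = "&".join([method.upper(), _enc(url), _enc(param_string)])
    # Signing key: encoded consumer secret & encoded token secret.
    signing_key = f"{_enc(consumer_secret)}&{_enc(token_secret)}"
    digest = hmac.new(signing_key.encode(), base_string.encode(), hashlib.sha1).digest()
    params["oauth_signature"] = base64.b64encode(digest).decode()
    return "OAuth " + ", ".join(f'{_enc(k)}="{_enc(v)}"' for k, v in sorted(params.items()))
```

The returned string goes in the `Authorization` header of a `POST` to `https://api.twitter.com/2/tweets` with a JSON body such as `{"text": "..."}`. The page does not say whether fusetweet uses OAuth 1.0a user keys or an OAuth 2.0 user token; with the latter, the header would simply be `Bearer <token>` and no signing step is needed.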

Unified Content Adaptation Workflow

The tool consolidates the entire repurposing journey into one seamless flow: input a URL, edit text and media, translate if needed, and publish. It removes the friction of switching between a downloader, an image editor, a translation tool, and a publishing dashboard. This unified workflow is designed for efficiency, minimizing errors and saving significant time for anyone regularly adapting tweet content.
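The workflow starts from a tweet URL. As a minimal sketch, assuming the usual `x.com/<user>/status/<id>` or `twitter.com/<user>/status/<id>` format, a client can extract the tweet ID locally before requesting the tweet from X's API. `parse_tweet_url` is a hypothetical helper, not part of fusetweet.

```python
import re
from typing import Optional, Tuple

# Matches x.com, twitter.com, www. and mobile. status URLs (assumed formats).
# Query strings such as "?s=21" after the ID are ignored.
_STATUS_URL = re.compile(
    r"^https?://(?:www\.|mobile\.)?(?:x|twitter)\.com/([A-Za-z0-9_]{1,15})/status/(\d+)"
)


def parse_tweet_url(url: str) -> Optional[Tuple[str, str]]:
    """Return (username, tweet_id) for a tweet URL, or None if it does not match."""
    match = _STATUS_URL.match(url.strip())
    if match is None:
        return None
    return match.group(1), match.group(2)
```

The ID is kept as a string because tweet IDs are large 64-bit integers; it can then be passed to X's v2 tweet lookup endpoint (`GET /2/tweets/:id`).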

Seedance 2.0

Multimodal Video Generation

Seedance 2.0 accepts multiple input formats to guide creation. You can generate a complete scene from a single text sentence, animate and extend a reference image while preserving its composition and style, or provide a simple script to shape story beats and pacing. This flexibility allows creators to start from their strongest idea, whether it's a written concept, a visual mood board, or a narrative outline, and transform it into a coherent video.

Integrated Audio-Video Synthesis (Pro)

The Pro version of the model features a fully integrated audio generation pipeline. In a single generation pass, it creates video synchronized with realistic sound effects, background music, and even speech synthesis with multilingual lip-sync. This removes the need for separate audio post-production, streamlining the workflow and keeping visual action and sound tightly aligned, from dialogue to ambient effects.
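What "synchronized" means can be made concrete with a little arithmetic: at a frame rate f and an audio sample rate r, each video frame spans r/f audio samples (2,000 samples at 24 fps and 48 kHz). The rates below are assumptions for illustration; the source does not state Seedance's output frame or sample rates, and `audio_span_for_frame` is a hypothetical helper.

```python
from typing import Tuple


def audio_span_for_frame(frame_index: int, fps: int = 24, sample_rate: int = 48_000) -> Tuple[int, int]:
    """Return the [start, end) range of audio samples that plays during one video frame.

    Integer arithmetic keeps consecutive spans contiguous even when
    sample_rate / fps is not a whole number (e.g. 44.1 kHz at 24 fps).
    """
    if frame_index < 0:
        raise ValueError("frame_index must be non-negative")
    start = frame_index * sample_rate // fps
    end = (frame_index + 1) * sample_rate // fps
    return start, end
```

A lip movement rendered in a given frame and the phoneme it depicts should fall inside the same span; generating both in one model avoids having to realign them afterward.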

Physics-Aware Motion Modeling

The model excels at simulating real-world physical dynamics with remarkable realism. It understands and renders complex interactions like cloth fluttering naturally in the wind, water splashes adhering to fluid physics, the dynamic behavior of flames and smoke, and intricate particle effects. This deep comprehension of physical principles results in motion that feels authentic and believable, elevating the quality beyond simple animation.

Temporal Consistency Architecture

At its core, Seedance 2.0 employs a novel diffusion transformer architecture specifically designed for temporal coherence. Its advanced temporal attention mechanism reuses motion cues across frames, ensuring consistent character identity and proportions, stable lighting and geometry, and smoother transitions. This technical foundation is what produces the model's signature stable frames with significantly reduced flicker and visual artifacts.
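Seedance's internals are not described beyond this paragraph, but the general idea of temporal attention can be illustrated with the factorized form used in many video diffusion transformers: each spatial token attends to the token at the same position in every frame. The toy sketch below assumes a single head and no learned projections (queries, keys, and values are the raw tokens); it is a teaching example, not Seedance's architecture.

```python
import math
from typing import List

Frames = List[List[List[float]]]  # indexed as [frame][spatial position][channel]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def temporal_self_attention(x: Frames) -> Frames:
    """Scaled dot-product attention across frames, independently at each position."""
    num_frames, num_pos, dim = len(x), len(x[0]), len(x[0][0])
    scale = 1.0 / math.sqrt(dim)
    out = [[[0.0] * dim for _ in range(num_pos)] for _ in range(num_frames)]
    for p in range(num_pos):
        # The sequence attended over is the same spatial token in every frame.
        seq = [x[t][p] for t in range(num_frames)]
        for t in range(num_frames):
            query = seq[t]
            scores = [scale * sum(q * k for q, k in zip(query, key)) for key in seq]
            weights = softmax(scores)
            for c in range(dim):
                out[t][p][c] = sum(w * value[c] for w, value in zip(weights, seq))
    return out
```

Each output token is a softmax-weighted average of the same location across frames, so features are pulled toward one another over time; this is the mechanism by which such layers can damp frame-to-frame flicker.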

Use Cases

fusetweet

Cross-Language Content Repurposing

Social media managers targeting global audiences can quickly translate and culturally adapt successful tweets from one language to another. For instance, a viral English tweet can be fetched, accurately translated and localized for a Spanish-speaking audience, and published, all within a single, streamlined workflow without leaving the browser.

Curating and Enhancing Tweet Threads

Content creators and journalists can use fusetweet to capture insightful tweet threads, condense or rewrite them for clarity, and republish them as a polished summary or commentary on their own profile. This is ideal for adding value to existing discussions or archiving key points from a public conversation in an enhanced format.

Rapid Response and Newsjacking

For brands and influencers engaged in real-time marketing, speed is critical. fusetweet allows a team to quickly fetch a relevant trending tweet, adapt the messaging to align with their brand voice, and publish a timely response, all before the moment passes. Because everything runs locally, there are no file uploads or third-party services to wait on.

Creating Accessible Content Versions

Individuals and organizations can use the tool to rewrite complex tweet threads into simpler, clearer language for better accessibility. This can involve breaking down long threads, simplifying jargon, and adding alt-text descriptions for images directly in the editor before reposting the more accessible version.

Seedance 2.0

Social Media Content Creation

Creators and influencers can rapidly produce high-quality, engaging short-form videos for platforms like TikTok, Instagram Reels, and YouTube Shorts. By turning a simple prompt or a single photo into a dynamic, branded clip with synchronized audio, they can maintain a consistent posting schedule and visual style without requiring extensive production resources or editing skills.

Prototyping for Film and Animation

Filmmakers, storyboard artists, and animators can use Seedance 2.0 to visualize concepts and iterate on scenes quickly. The ability to generate coherent motion from a script or image allows for rapid prototyping of shots, testing of visual styles, and creation of compelling pitch reels, significantly speeding up the pre-production process and enhancing creative collaboration.

Marketing and Advertising

Marketing teams can generate product demos, explainer videos, and dynamic advertisements tailored for different platforms and aspect ratios. The model's consistency ensures brand elements, colors, and character identities remain stable across multiple video assets, enabling efficient creation of cohesive campaign materials that capture audience attention with professional polish.

Educational and Training Material

Educators and corporate trainers can transform static images or text-based lesson plans into engaging animated videos. Complex concepts can be illustrated with clear, coherent motion and supplemented with synchronized narration or sound effects, making learning materials more accessible, memorable, and effective for diverse audiences.

Overview

About fusetweet

fusetweet is a streamlined, browser-based tool for content creators, marketers, and social media managers who need to repurpose and adapt content from X/Twitter efficiently. It replaces the tedious, multi-step process of manually copying tweet text, downloading images, and recreating posts for different audiences or platforms. Its core value proposition is a single, secure workflow that takes a tweet URL and outputs an edited or translated version, ready for republication, entirely within your browser. There are no file uploads, software downloads, or mandatory sign-ups, which keeps the tool fast and simple. fusetweet bridges the gap between simple thread-archiving tools and content schedulers by focusing on the crucial intermediate step: content adaptation. All processing, including AI-powered translation, happens locally on your device, and your API key and data never pass through fusetweet's servers, a level of privacy uncommon in social media tools.

About Seedance 2.0

Seedance 2.0 is an AI video generation model developed by ByteDance's Seed research team, representing the cutting edge of multimodal content creation. It transforms simple inputs (a text prompt, an image, or a script) into hyper-realistic, cinematic-quality video sequences. Designed for creators, marketers, filmmakers, and businesses, its core value proposition lies in fluid motion, temporal coherence, and production-ready quality. Unlike models that treat frames independently, Seedance 2.0 is architected for consistency, preserving character identity, lighting, and scene geometry across frames to eliminate jarring flicker and unnatural jumps. Its most notable advancement, available in the Pro version, is the integrated, physics-aware generation of synchronized video and audio within a single model pass, a capability that sets it apart in a competitive landscape. It suits anyone who wants to produce stable, coherent, and visually striking video quickly and with fine creative control, moving beyond experimental clips into professional storytelling.

Frequently Asked Questions

fusetweet FAQ

Is my data secure with fusetweet?

Yes, security is a foundational principle. All processing occurs locally in your browser. The tweet content, any translations, and most importantly your X API key are never sent to fusetweet's servers or to any third party other than X itself. You interact directly with X's API from your own machine.

Do I need to install any software?

No installation is required. fusetweet is a web application that runs entirely in any modern browser, such as Chrome, Firefox, or Edge. There is no need to download or install desktop software, plugins, or mobile apps.

What do I need to start using fusetweet?

You need two things: a tweet URL you wish to adapt and a valid X/Twitter API key with write permissions. The tool will guide you on where to obtain this key. No account registration with fusetweet itself is necessary.

Can I edit images from the original tweet?

fusetweet focuses on editing and translating text, but it also handles media: it fetches the images from the tweet URL so you can republish them with your adapted text. For advanced image editing, such as cropping or filtering, you will likely need a dedicated image tool before or after using fusetweet.

Seedance 2.0 FAQ

What makes Seedance 2.0 different from other AI video models?

Seedance 2.0 distinguishes itself through its foundational focus on temporal consistency and integrated multimodal generation. Its diffusion transformer architecture is specifically engineered to maintain coherence across frames, drastically reducing flicker. Most notably, its Pro version can generate synchronized video and audio—including sound effects, music, and lip-synced speech—in a single pass, a unified approach not commonly found in other models that often treat audio as a separate post-processing step.

Can I control the aspect ratio and resolution of the videos?

Yes, Seedance 2.0 provides controls for both aspect ratio and resolution to suit different platforms. You can choose from standard ratios like 9:16 (vertical), 1:1 (square), and 16:9 (widescreen). The platform also offers various quality options, allowing you to generate content optimized for everything from social media feeds to presentations requiring higher clarity.
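For a given aspect ratio, the pixel dimensions follow from fixing one edge. The sketch below assumes the resolution setting fixes the shorter edge (for example 720 or 1080 pixels) and that both edges are snapped to multiples of 8, a common constraint for video encoders and latent-space models; neither detail is stated for Seedance, and `frame_size` is a hypothetical helper.

```python
from typing import Dict, Tuple

# Aspect ratios named in the FAQ, as (width units, height units).
ASPECT_RATIOS: Dict[str, Tuple[int, int]] = {"9:16": (9, 16), "1:1": (1, 1), "16:9": (16, 9)}


def frame_size(ratio: str, short_side: int, multiple: int = 8) -> Tuple[int, int]:
    """Return (width, height) in pixels with the shorter edge fixed at `short_side`."""
    w_units, h_units = ASPECT_RATIOS[ratio]
    if w_units >= h_units:
        width, height = short_side * w_units / h_units, float(short_side)
    else:
        width, height = float(short_side), short_side * h_units / w_units

    def snap(v: float) -> int:
        # Round to the nearest multiple, never below one multiple.
        return max(multiple, round(v / multiple) * multiple)

    return snap(width), snap(height)
```

For example, a 16:9 clip with a 720-pixel short edge comes out at 1280 × 720, while its 9:16 counterpart is 720 × 1280.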

How does Seedance 2.0 maintain character consistency?

The model utilizes a dedicated character consistency module within its temporal attention framework. This technology actively preserves key identity cues—such as facial features, clothing details, and body proportions—across every frame of the generated video. This ensures that a character introduced at the beginning looks and moves like the same character throughout the scene, even during complex motion.

What is required to generate a video?

To generate a video, you need to provide a primary input, which can be a text prompt, an uploaded reference image, or a script. You then select your desired parameters like model version (e.g., Seedance 1.5 Pro), aspect ratio, duration, and whether to enable features like audio generation. The process is designed to be intuitive, guiding you from concept to final video with clear creative controls.
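The inputs and settings listed above can be captured in a small request object. The sketch below is entirely hypothetical: `GenerationRequest` and its field names are illustrative, not a published Seedance API, and it assumes only the three aspect ratios named in this FAQ are allowed.

```python
from dataclasses import dataclass
from typing import Optional

# Assumption: only the ratios named in the FAQ; the real platform may offer more.
ALLOWED_RATIOS = {"9:16", "1:1", "16:9"}


@dataclass
class GenerationRequest:
    """Hypothetical container for a video generation job (not an official API)."""

    model: str  # e.g. "Seedance 1.5 Pro"
    aspect_ratio: str
    duration_s: float
    text_prompt: Optional[str] = None
    reference_image: Optional[str] = None  # path or URL of an uploaded image
    script: Optional[str] = None
    audio: bool = False  # enable synchronized audio generation

    def validate(self) -> None:
        # At least one primary input is required.
        if not (self.text_prompt or self.reference_image or self.script):
            raise ValueError("provide a text prompt, a reference image, or a script")
        if self.aspect_ratio not in ALLOWED_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio}")
        if self.duration_s <= 0:
            raise ValueError("duration must be positive")
```

Validating locally before submitting a job catches missing inputs early; real duration limits and model names would have to come from the platform's own documentation.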