OpenMark AI vs qtrl.ai

Side-by-side comparison to help you choose the right AI tool.

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

qtrl.ai

qtrl.ai scales QA testing with AI agents while ensuring full team control and governance.

Last updated: March 4, 2026

Overview

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe the task you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs. Repeating each run exposes variance, so your decision does not rest on a single lucky output.
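To make "stability across repeat runs" concrete, here is a minimal sketch of one way to summarize repeated quality scores per model. It is not OpenMark AI's actual scoring method: the model names, the 0 to 10 scale, the scores, and the `summarize_runs` helper are all hypothetical, and standard deviation is only one possible measure of spread.

```python
from statistics import mean, pstdev


def summarize_runs(scores):
    """Return (mean, spread) for quality scores from repeated runs of one model.

    Spread is the population standard deviation: 0 means every run scored
    the same, larger values mean less consistent output.
    """
    if not scores:
        raise ValueError("need at least one score")
    return mean(scores), pstdev(scores)


# Hypothetical quality scores (0-10 scale) for the same task run four times.
runs = {
    "model_a": [9, 9, 9, 9],    # slightly lower peak, fully consistent
    "model_b": [10, 6, 10, 6],  # higher peak, but unstable
}

summary = {name: summarize_runs(scores) for name, scores in runs.items()}

for name, (avg, spread) in summary.items():
    print(f"{name}: mean={avg:.2f} spread={spread:.2f}")
```

In this made-up data, a single run of `model_b` could score a perfect 10, yet its average is lower and its spread is higher than `model_a`'s. That is the kind of difference a one-shot comparison would hide.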

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
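As an illustration of cost efficiency versus raw price, the sketch below ranks models by quality per dollar. The ratio is an assumed definition chosen for this example, not a formula documented by OpenMark AI, and the model names, scores, and per-request costs are invented.

```python
def quality_per_dollar(quality, cost_usd):
    """Quality score divided by cost per request (an assumed efficiency metric)."""
    if cost_usd <= 0:
        raise ValueError("cost_usd must be positive")
    return quality / cost_usd


# Hypothetical results: (quality score on a 0-1 scale, cost per request in USD).
candidates = {
    "cheap_model": (0.40, 0.001),
    "mid_model": (0.90, 0.002),
    "premium_model": (0.95, 0.010),
}

efficiency = {
    name: quality_per_dollar(quality, cost)
    for name, (quality, cost) in candidates.items()
}

cheapest = min(candidates, key=lambda name: candidates[name][1])
most_efficient = max(efficiency, key=efficiency.get)

print("cheapest:", cheapest)
print("most efficient:", most_efficient)
```

With these invented numbers the cheapest model and the most efficient model differ, which is the distinction the paragraph above draws between cost efficiency and token price alone.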

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.

About qtrl.ai

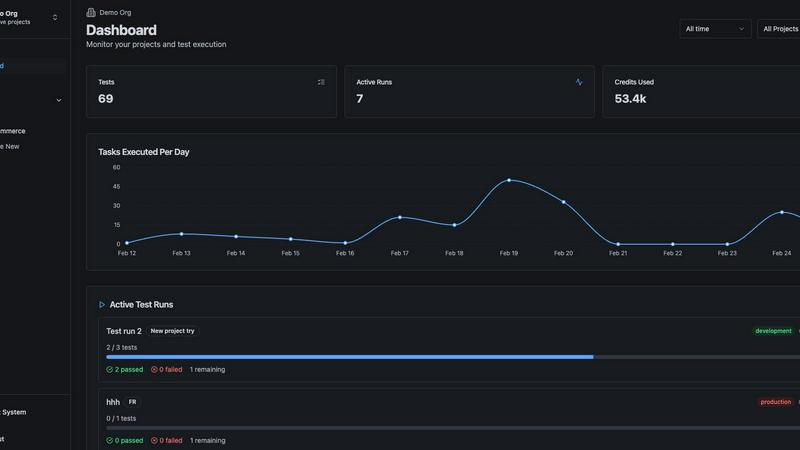

qtrl.ai is a modern QA platform engineered for software teams who need to scale their quality assurance efforts without compromising on control, governance, or trust. It addresses the fundamental tension in software testing: the slow, unscalable nature of manual processes versus the brittle, expensive complexity of traditional test automation.

qtrl provides a unified solution by combining robust, enterprise-grade test management with a progressive, trustworthy layer of AI-powered automation. This creates a centralized hub where teams can meticulously organize test cases, plan and execute test runs, trace requirements to coverage, and monitor quality through real-time dashboards.

The platform is designed for progression, allowing teams to start with structured manual test management and gradually introduce intelligent autonomous agents. These agents can generate and maintain UI tests from plain English, execute them at scale across real browsers and environments, and adapt as the application evolves.

Built for product-led engineering teams, QA groups moving beyond manual testing, and enterprises with strict compliance needs, qtrl.ai offers a trusted, transparent path to faster, more intelligent, and fully governed quality assurance.