Agent to Agent Testing Platform vs AgentSea

Side-by-side comparison to help you choose the right AI tool.

Agent to Agent Testing Platform

TestMu AI validates AI agents for safety, accuracy, and reliability across all interaction modes.

Last updated: February 28, 2026

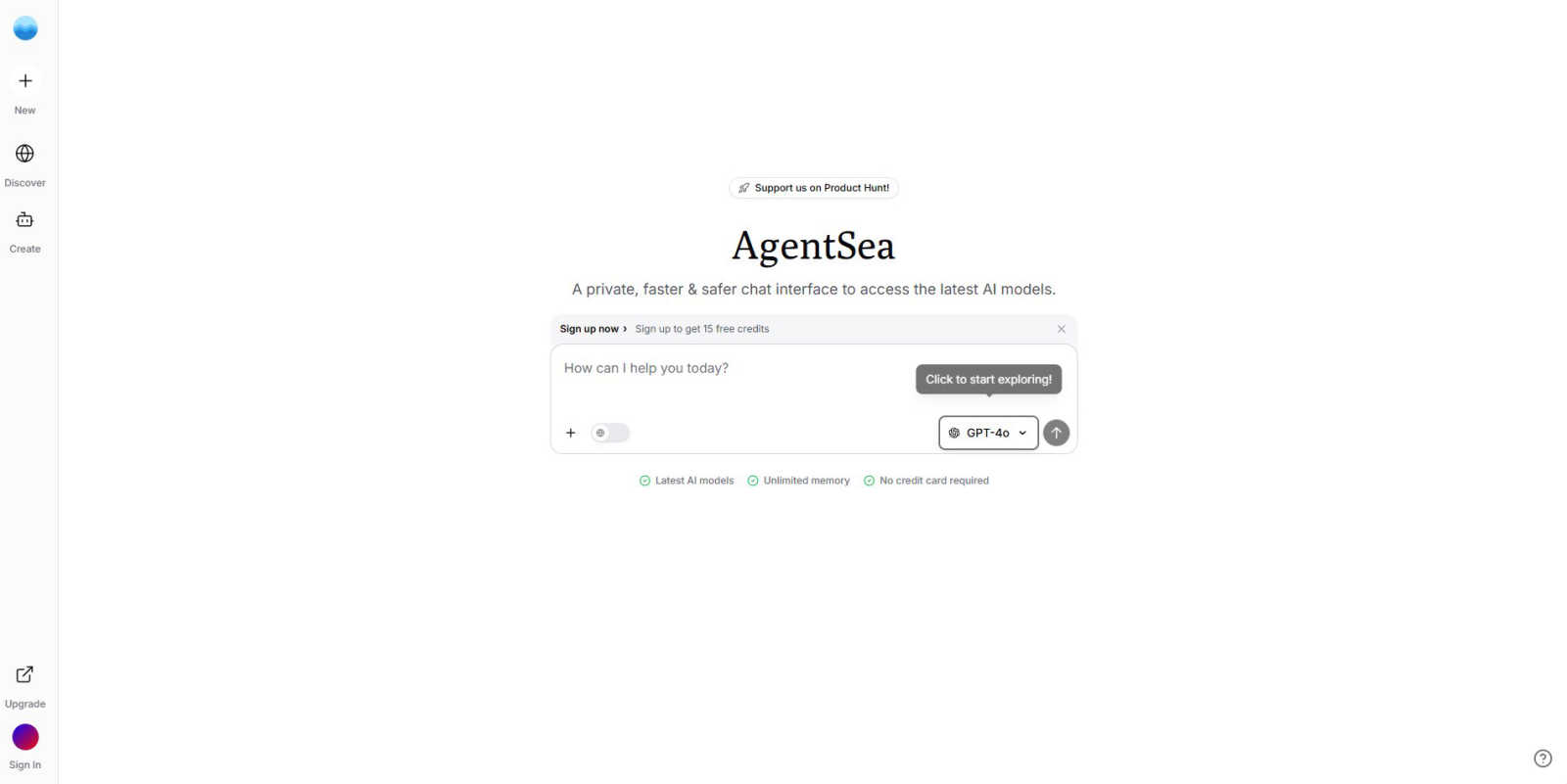

AgentSea

AgentSea is your private hub for seamless, context-aware conversations across all major AI models.

Last updated: March 1, 2026

Feature Comparison

Agent to Agent Testing Platform

Autonomous Multi-Agent Test Generation

The platform employs a team of over 17 specialized AI agents to autonomously create diverse and complex test scenarios. These agents act as synthetic users, generating a vast array of conversational paths, edge cases, and long-tail interaction patterns that would be impractical to script manually. This ensures comprehensive coverage and uncovers failures that human testers are likely to miss.

True Multi-Modal Understanding and Testing

Go beyond text-based validation. The platform allows you to define requirements or upload PRDs (Product Requirement Documents) that include diverse inputs like images, audio, and video. It tests the AI agent's ability to understand and respond appropriately to these multi-modal inputs, accurately mirroring complex real-world user scenarios and interactions.

Diverse Persona-Based Testing

Simulate a wide spectrum of real human users by leveraging a library of diverse personas, such as an International Caller or a Digital Novice. This feature ensures your AI agent is tested against different user behaviors, accents, technical proficiencies, and needs, guaranteeing it performs effectively and empathetically for your entire user base, not just a homogeneous group.

Regression Testing with Intelligent Risk Scoring

Perform end-to-end regression testing for your AI agent with clear, prioritized insights. The platform provides a risk score that highlights potential areas of concern based on test results. This allows development and QA teams to quickly identify and prioritize critical issues, optimizing testing efforts and ensuring stability through continuous updates and deployments.
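
The page does not say how the risk score is calculated. As a rough illustration only, the sketch below assumes a severity-weighted failure rate per test area; `CaseResult`, `risk_scores`, `prioritize`, and the 1 to 5 severity scale are hypothetical names and choices, not part of the platform.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class CaseResult:
    """One executed test case (hypothetical structure, not the platform's schema)."""
    area: str      # part of the agent being exercised, e.g. "data privacy"
    passed: bool
    severity: int  # assumed scale: 1 (minor) to 5 (critical)


def risk_scores(results: List[CaseResult]) -> Dict[str, float]:
    """Severity-weighted failure rate per area, between 0.0 and 1.0."""
    failed: Dict[str, int] = {}
    total: Dict[str, int] = {}
    for r in results:
        total[r.area] = total.get(r.area, 0) + r.severity
        if not r.passed:
            failed[r.area] = failed.get(r.area, 0) + r.severity
    return {area: failed.get(area, 0) / total[area] for area in total}


def prioritize(results: List[CaseResult]) -> List[Tuple[str, float]]:
    """Areas sorted from highest to lowest risk."""
    return sorted(risk_scores(results).items(), key=lambda kv: kv[1], reverse=True)


results = [
    CaseResult("intent recognition", True, 2),
    CaseResult("intent recognition", False, 2),
    CaseResult("data privacy", False, 5),
    CaseResult("data privacy", True, 1),
    CaseResult("tone", True, 3),
]
scores = risk_scores(results)
ranked = prioritize(results)
```

Weighting by severity means a single failed critical case can outrank several failed minor ones, which is one plausible way to surface the areas a QA team should look at first.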

AgentSea

Unified Model Access

Access a vast selection of leading AI models from a single interface. This includes top-tier proprietary models and innovative open-source alternatives, removing the need to juggle multiple subscriptions, logins, or browser tabs. This consolidation saves time and provides immediate flexibility to choose the right tool for any specific task without interruption.

Persistent Context Memory

Maintain a continuous conversation thread that persists across different AI models. This groundbreaking feature allows you to start a project with one model for brainstorming, switch to another for detailed analysis or coding, and then move to a third for creative refinement, all while preserving the full context and history of your interaction.

Integrated AI Agent Ecosystem

Go beyond basic chat and tap into a curated library of hundreds of specialized AI agents and tools directly within the platform. These agents are designed for specific tasks—from data analysis and image generation to code debugging and content optimization—providing unparalleled capability without ever leaving your primary workspace.

Enterprise-Grade Privacy & Security

Your conversations and data are protected with a strong commitment to confidentiality. The platform is built with security-first principles, ensuring that your proprietary information, creative work, and sensitive research remain private and secure, giving professionals the confidence to integrate AI into their core workflows.

Use Cases

Agent to Agent Testing Platform

Pre-Production Validation of Customer Service Bots

Before launching a new customer support chatbot or voice assistant, enterprises can use the platform to simulate thousands of customer interactions. This validates intent recognition, escalation logic, policy adherence (e.g., data privacy), and the overall conversational flow, ensuring the agent is ready for live deployment and reducing the risk of brand-damaging failures.

Ensuring Compliance and Reducing Toxicity/Bias

Organizations can proactively test AI agents for unintended bias, toxic responses, or compliance violations. By generating tests from diverse personas and checking for policy breaches, the platform helps mitigate legal, ethical, and reputational risks, ensuring AI interactions are safe, fair, and aligned with corporate and regulatory standards.

Continuous Testing for Agentic AI Pipelines

Integrate the platform into CI/CD pipelines for continuous validation of AI agents. Every time an agent's model, prompts, or knowledge base is updated, autonomous regression tests can run at scale to immediately detect regressions in performance, accuracy, or reasoning, maintaining high quality through rapid development cycles.
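
One way to picture a regression gate in a pipeline is a step that compares the latest evaluation metrics against a stored baseline and fails the build when any metric drops too far. The sketch below is generic and does not use the platform's API; the metric names, the `find_regressions` and `gate` helpers, and the 0.02 tolerance are all assumptions made for illustration.

```python
from typing import Dict, List


def find_regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float = 0.02,
) -> List[str]:
    """Return metric names that are missing or dropped by more than `tolerance`."""
    regressions = []
    for metric, old in baseline.items():
        new = current.get(metric)
        if new is None or old - new > tolerance:
            regressions.append(metric)
    return sorted(regressions)


def gate(baseline: Dict[str, float], current: Dict[str, float], tolerance: float = 0.02) -> int:
    """Exit code for a CI step: 0 lets the build pass, 1 fails it."""
    return 1 if find_regressions(baseline, current, tolerance) else 0


# Hypothetical scores from the previous release and from the current run.
baseline = {"accuracy": 0.91, "policy_compliance": 0.99, "reasoning": 0.85}
current = {"accuracy": 0.92, "policy_compliance": 0.95, "reasoning": 0.84}

regressed = find_regressions(baseline, current)
exit_code = gate(baseline, current)
```

In a real pipeline the script would end with `sys.exit(exit_code)` so that the CI runner marks the job as failed when a regression is found.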

Performance Benchmarking Across Modalities

Compare and benchmark the performance of different AI agent models or configurations across chat, voice, and phone modalities. The platform provides detailed, consistent metrics on effectiveness, accuracy, empathy, and professionalism, enabling data-driven decisions to select and optimize the best agent for specific use cases.

AgentSea

Cross-Model Research & Analysis

Researchers and analysts can leverage different AI strengths within one project. Start by gathering broad information with a model known for web knowledge, switch to a highly logical model for data interpretation and hypothesis testing, and finally use a creative model to synthesize findings into a compelling report, all with seamless context transfer.

Iterative Creative Development

Writers, marketers, and creators can develop content through collaborative AI stages. Brainstorm initial concepts with a creative model, refine the draft with a model specializing in tone and grammar, generate supporting visuals or code snippets with specialized agents, and finally optimize the copy for SEO, all in a fluid, uninterrupted workflow.

Complex Technical Problem-Solving

Developers and engineers can tackle multi-faceted technical challenges. Use one model for architectural design and pseudocode, another for writing and debugging actual code in a specific language, and a third for documenting the solution or generating test cases, maintaining a coherent technical thread throughout the entire process.

Streamlined Business Intelligence

Business professionals can consolidate their AI-driven tasks. Generate market summaries, analyze financial data with a specialized agent, create presentation visuals and narratives, and draft client communications, using the most suitable model for each step while building upon a single, consistent knowledge base within the platform.

Overview

About Agent to Agent Testing Platform

Agent to Agent Testing Platform is the first AI-native quality assurance framework specifically engineered for the unique challenges of agentic AI systems. As AI agents—such as chatbots, voice assistants, and phone caller agents—become more autonomous and complex, traditional software testing methods are rendered obsolete. This platform provides a dedicated assurance layer that validates AI behavior in real-world, dynamic environments. It moves beyond simple prompt checks to evaluate full, multi-turn conversations across chat, voice, phone, and multimodal experiences. Designed for enterprises deploying AI at scale, its core value proposition is de-risking production rollouts by proactively uncovering long-tail failures, edge cases, and problematic interaction patterns that manual testing cannot reliably find. By leveraging a team of specialized AI agents to autonomously generate and execute thousands of synthetic user tests, it delivers actionable insights on critical metrics like bias, toxicity, hallucination, and policy compliance, ensuring AI agents perform accurately, reliably, and safely for all end-users.

About AgentSea

AgentSea, now rebranded as Okara.ai, is a unified chat interface designed to be the central command center for your AI interactions. It eliminates the common friction of managing multiple AI tools by providing a single, cohesive environment where you can access the latest and most powerful AI models, from industry-standard giants to cutting-edge open-source alternatives. The platform is built for professionals, creators, developers, and anyone who demands efficiency and power from their AI toolkit. Its core value proposition is a context-aware experience that maintains persistent memory across different models. This allows you to initiate a complex task with one AI and switch to another for refinement, specialized analysis, or a different creative perspective without losing the thread of your conversation. AgentSea (Okara.ai) pairs a strong commitment to privacy and security, which keeps your work confidential, with consolidated access to hundreds of specialized AI agents alongside core language models, supporting workflows for research, creation, and problem-solving.

Frequently Asked Questions

Agent to Agent Testing Platform FAQ

What makes Agent to Agent Testing different from traditional QA?

Traditional QA is built for deterministic, static software with predictable outputs. AI agents are probabilistic and dynamic, and their behavior evolves through conversation. This platform is AI-native, using other AI agents to test these non-linear, multi-turn interactions for nuances like reasoning, tone, and context-handling that scripted tests cannot evaluate.

What types of AI agents can be tested with this platform?

The platform is designed to test a wide range of AI-powered conversational agents. This includes text-based chatbots, voice assistants (like IVR systems), phone caller agents, and hybrid agents that operate across multiple modalities (text, voice, image). It validates the full agentic system, not just the underlying LLM.

How does the platform generate relevant test scenarios?

It uses a suite of specialized AI agents (e.g., a Personality Tone Agent, Data Privacy Agent) to autonomously create test scenarios. You can also access a pre-built library of hundreds of scenarios or create custom ones by defining requirements or uploading documents (PRDs), ensuring tests are tailored to your agent's specific functions and expected user interactions.

Can I integrate this testing into my existing development workflow?

Yes. The platform seamlessly integrates with TestMu AI's HyperExecute for large-scale cloud execution. This allows you to incorporate autonomous AI agent testing into your CI/CD pipelines, triggering test suites at scale with minimal setup and receiving actionable, detailed evaluation reports within minutes to inform development decisions.

AgentSea FAQ

What is the relationship between AgentSea and Okara.ai?

AgentSea has been rebranded as Okara.ai. The platform you access is the same sophisticated, unified AI interface. The rebrand reflects the evolution and expanded vision of the service, but all core features, functionality, and your user experience remain consistent under the new name.

How does the persistent context memory work?

The platform maintains the full history and context of your conversation in a session. When you switch from one AI model to another, the entire conversation thread is provided to the new model as context. This allows the new AI to understand exactly where you left off, enabling truly seamless collaboration between different models on a single task.
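
The behavior described above can be sketched as a single shared message history that is passed in full to whichever model is selected. This is a minimal illustration under stated assumptions, not Okara.ai's implementation: `SharedConversation`, the callable model interface, and the two stand-in models are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Message = Dict[str, str]                # e.g. {"role": "user", "content": "..."}
Model = Callable[[List[Message]], str]  # hypothetical interface: full history in, reply out


@dataclass
class SharedConversation:
    """Keeps one history that every model receives in full."""
    models: Dict[str, Model]
    history: List[Message] = field(default_factory=list)

    def ask(self, model_name: str, prompt: str) -> str:
        self.history.append({"role": "user", "content": prompt})
        # The selected model gets a copy of the whole thread, not only the latest prompt.
        reply = self.models[model_name](list(self.history))
        self.history.append({"role": "assistant", "content": reply, "model": model_name})
        return reply


# Stand-in models that only report how much context they were given.
def brainstorm_model(messages: List[Message]) -> str:
    return f"brainstorm saw {len(messages)} message(s)"


def coding_model(messages: List[Message]) -> str:
    return f"coder saw {len(messages)} message(s)"


chat = SharedConversation(models={"brainstorm": brainstorm_model, "coder": coding_model})
first = chat.ask("brainstorm", "Outline a CLI tool.")
second = chat.ask("coder", "Now implement the outline.")
```

Here the second model is called with three messages (the first prompt, the first model's reply, and the new prompt), which is what lets it pick up where the previous model left off.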

What kind of AI models and agents are available?

Okara.ai (formerly AgentSea) provides access to a curated selection of the most powerful and useful AI models, including leading proprietary LLMs and top open-source alternatives. Furthermore, it integrates hundreds of specialized pre-configured AI agents designed for specific tasks like data analysis, image generation, code review, and content optimization.

Is my data private and secure when using the platform?

Yes. Privacy and security are foundational principles. The platform is built with enterprise-grade safeguards to ensure your conversations, uploaded documents, and generated content remain confidential. Your data is not used to train public models without your explicit consent, allowing for secure handling of sensitive or proprietary information.

Alternatives

Agent to Agent Testing Platform Alternatives

Agent to Agent Testing Platform is a specialized AI-native quality assurance framework for validating autonomous AI agents. It belongs to the AI Assistants and agent testing category, providing a dedicated layer to evaluate multi-turn conversations across chat, voice, phone, and multimodal systems before production. Users may explore alternatives for various reasons, such as budget constraints, specific feature requirements not covered, or a need for a platform that integrates differently with their existing tech stack. The search often stems from a need to find the right balance of depth, scalability, and cost for their unique agentic AI validation challenges. When evaluating alternatives, prioritize solutions that offer comprehensive, multi-turn conversation testing beyond simple prompt checks. Look for capabilities in autonomous test generation, validation of security and compliance policies, and the ability to simulate realistic user interactions at scale to uncover edge cases and long-tail failures effectively.

AgentSea Alternatives

AgentSea is a unified AI assistant platform, designed as a private hub for seamless, context-aware conversations across major AI models. It consolidates access to hundreds of models and specialized tools into a single, secure interface, eliminating the need to manage multiple subscriptions and browser tabs. Users explore alternatives for various reasons, such as specific budget constraints, a need for different feature sets, or platform compatibility requirements. Some may seek a simpler tool for basic tasks, while others require deeper integrations with particular workflows or development environments. When evaluating alternatives, consider the core value of a unified workspace. Key factors include the range of supported AI models, the sophistication of context management between tools, the overall commitment to data privacy, and how well the platform aligns with your specific professional or creative workflow.